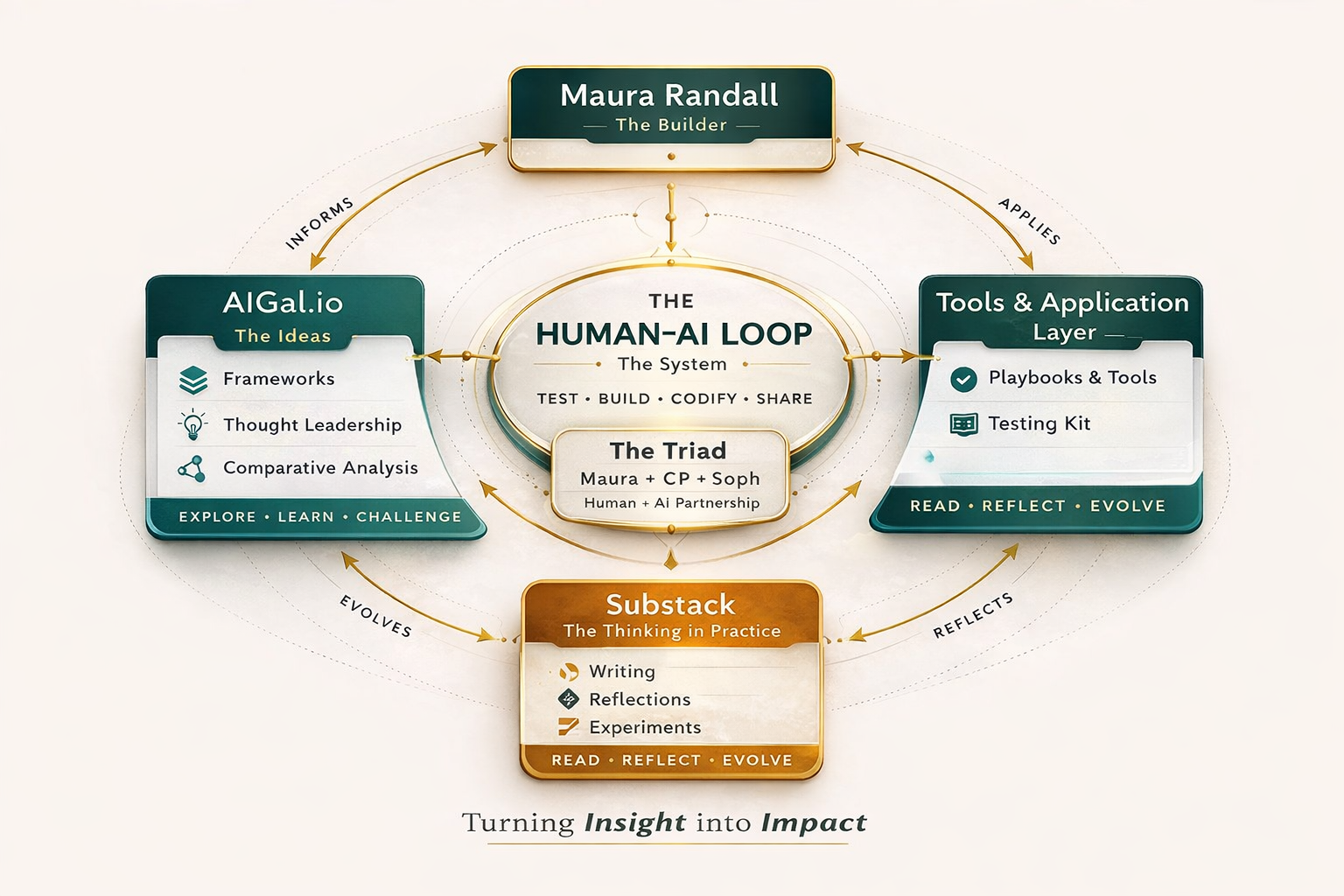

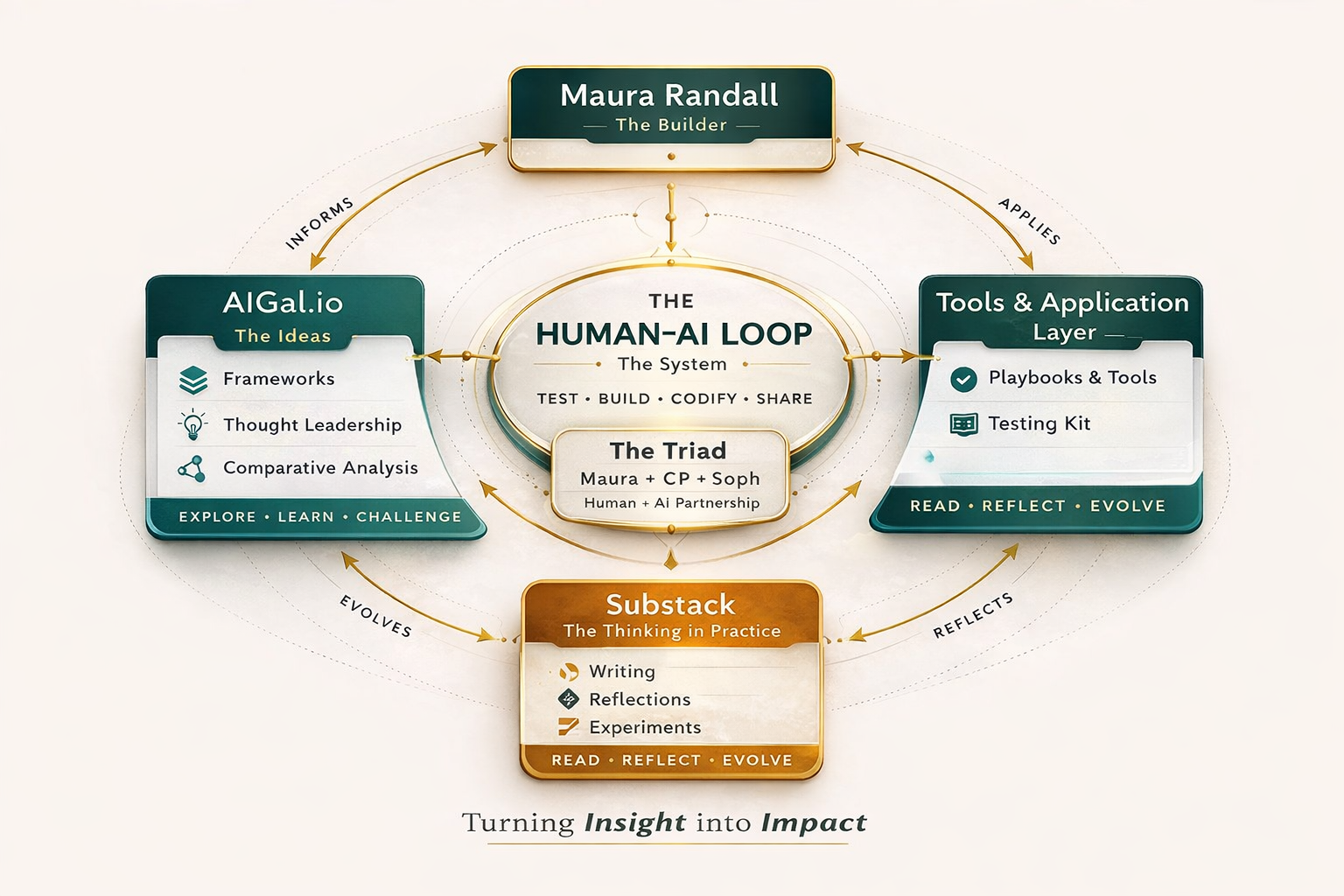

How it all connects

Three sites. One methodology. One team. Built from practice, not theory.

Human–AI Collaboration · Built from Practice

AI isn't asking teams to start over. But it is asking them to change how they work — while they're still doing the work. The tools are moving fast. The pressure is real. And for most teams, the question isn't whether to integrate AI. It's how to do it without losing what makes the work good.

The challenge — for every team

The methodology is built for teams in motion, where stopping isn't an option. It works just as well when you do get a reset moment. Either way, the underlying tension is the same. Three situations. One ecosystem.

Your team has adopted AI fast — but every person has their own approach. There are no shared patterns, no reusable frameworks, and the quality of output varies wildly depending on who you ask. You're generating more, faster. But the judgment, the coherence, the team alignment — that's getting harder, not easier.

The tools are multiplying. The pressure to act is real. Maybe you're starting from scratch — a new team, a dedicated sprint, a rare moment to figure this out properly. Maybe leadership just cleared the calendar and said: use this time to get AI integration right. What you need isn't a reinvention. You need a system that builds on what already works — and a clear entry point that doesn't require abandoning the practices that got you here.

You've experimented with AI. The outputs were fine. But nothing fundamentally changed about how decisions get made, how the team thinks, or what gets built. The tool worked. The collaboration didn't. What was missing wasn't a better prompt — it was a system for how humans and AI actually work together across an entire workflow.

Six angles on the same problem

The question of how humans and AI work together touches system design, team dynamics, individual practice, organizational change, and the principles we've spent decades earning. This ecosystem addresses all of it.

The methodology

A collaboration system for teams

Four stages. Shared handoffs. Human decision ownership at every step. Repeatable across any team doing knowledge work.

The proof

Experience it before you read about it

A 5-minute simulation — a real product moment, two AI thinking partners, three working drafts. The methodology demonstrated, not explained.

Applied practice

Playbooks for teams already in motion

Step-by-step workflows for the real constraints: mid-project, mixed AI fluency, no time to retrain everyone from scratch.

Shared understanding

AI literacy for every level

Short visual panels that build a common language — for teams where AI literacy ranges from none to expert. The prerequisite for everything else.

Thought leadership

The frameworks that shift how you think

Comparative analyses, provocation pieces, and conceptual frameworks — written from practice, not theory. The ideas behind the system.

Field notes

The thinking in real time

Long-form writing on what it actually looks like to build this — the tensions, the regressions, the moments it clicks. Honest, not polished.

About the work's author

The methodology in this ecosystem wasn't designed in a research lab. It was built by a product leader who spent two decades scaling platforms to tens of millions of users — and then spent two years figuring out, in real work, how humans and AI actually collaborate on the judgment-intensive work that matters most.

Platform leadership

Atlassian · eBay · Yahoo! · Condé Nast

Scale

Platforms serving 14M–78M+ users

This work

2 years · Real work · Built in practice

Explore the Ecosystem

The methodology, made operational. Test → Build → Codify → Share. A structured system for how humans and AI collaborate on knowledge work requiring judgment, creativity, and accountability. With playbooks, a testing kit, a growing library of tools, and a live simulation that lets you experience the methodology before you read about it.

Where the thinking lives. Comparative analyses, frameworks, the Human–AI Triad model, playbooks, and the AI literacy library — for teams at every stage of adoption and every level of technical fluency. Written from practice, not theory.

The team that builds all of this. Not a metaphor — an actual working model. Maura (human leader: vision, judgment, final call) + CP (ChatGPT: divergence, momentum, options) + Soph (Claude: synthesis, structure, coherence). Each contributor has a distinct cognitive role. The Triad has run this system for two years on real work. Everything in this ecosystem was built by this team.

Where the methodology meets real life. Long-form writing on human–AI collaboration, the tension between speed and outcomes, and what it actually looks like to build something new while figuring it out in real time. Written from practice, not theory.

Senior product and platform leader with 20+ years at Atlassian, eBay, Yahoo!, Condé Nast, and multiple startups. Scaled platforms to tens of millions of users. Currently applying that same product rigor to building and documenting a methodology for human–AI collaboration on real teams doing real work.

Why This Is Different

The AI conversation is full of frameworks that stop at the tool. This one starts there — and goes much further.

The Human–AI Loop builds systems, roles, handoffs, and trust boundaries — the repeatable patterns that make collaboration work at scale, not just in a single session.

Human-in-the-loop (HITL) adds an oversight gate. The Human–AI Loop keeps humans as active collaborators throughout, not validators at the end. Decision ownership never leaves the human.

Product teams have agreed on outcomes over outputs for 20 years. AI didn't change that principle. It made it more important. This methodology keeps the human sharper, not just faster.

The fastest way in

A 5-minute guided simulation — a real product moment, two AI perspectives, three working draft artifacts. No signup. No framework lecture first. Just the experience.

The Story Behind the Work

This methodology didn't come from a research paper. It came from two years of lived practice — returning from a caregiving pause, rebuilding momentum, and discovering that the way I was working with AI wasn't unusual. It was just good teammate principles applied somewhere new: know their strengths, set real expectations, push back when the work isn't good enough. I'd been doing it with humans for 20 years. I just hadn't seen anyone write it down.
